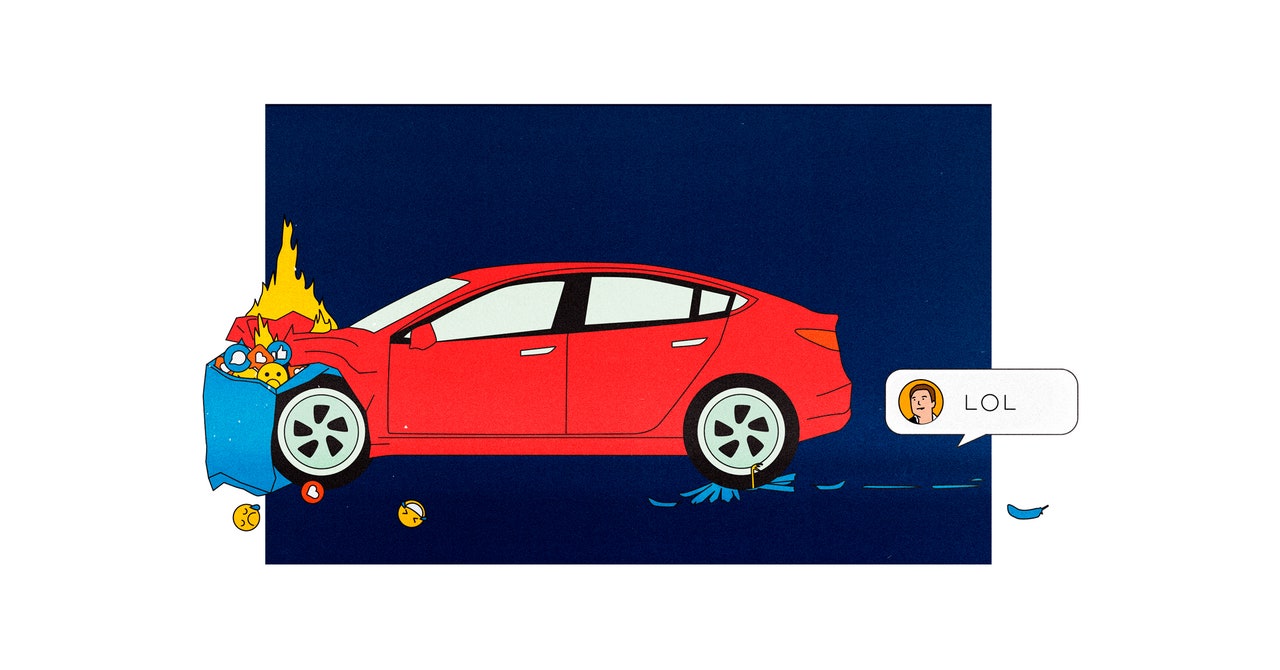

I find it a good philosophical exercise to imagine the last tweet. It could come centuries hence, when a cryptobot offers a wistful adieu to another cryptobot, or in 2025, when Donald Trump, the newly inaugurated president for life, pushes the big Electromagnetic Pulse button on the Resolute desk. Or it could come in a few months, when Elon Musk realizes that aggregating human despair has no upside, regrets plowing his electric clown car into a social media goat rodeo, and shuts the whole thing down with a single “lol.” (Way to own the libs.) What then? We’ll all move over to some Twitter replacement like Mastodon, hundreds of millions of us, and ruin that too? Sigh.

Lately it feels like the last tweet could come any day. The whole tech industry—by which I mean the cluster of companies that sell code-empowered products to billions of humans—is in extraordinary decline. The Zuckerverse has everything but users, which means Meta must come up with ever more creative ways to ruin Instagram and/or society. Microsoft, Amazon, Google—their stock charts look like Niagara Falls in profile. At least $3 trillion has ridden over the cataracts in a barrel. When your brand is infinite growth, investors don’t like to see failure. It has become possible to imagine not just the last tweet but also the day when Facebook exists only as a multi-exabyte ZIP file in archival storage, or when Googling is an interactive exhibit at the Internet History Museum.

Of course, truly giant things—at the scale of social media platforms, religions, and nation-states—don’t really die. They deflate like air mattresses, getting soft at the corners and occasionally waking you up to pump them. The entities that dominated my childhood, AT&T and the Soviet Union, seemed at one point to have given up the ghost. There was rejoicing: Now a million new innovative companies can flourish! Now democracy will spread everywhere! Both were stripped for parts—and those parts eventually recombined into new, enormous forms, like beads of mercury finding each other on a plate. A re-blobbed AT&T ended up buying a ton of things, including Time Warner, giving it control of both the piping and the content. The former USSR, well … There is always someone with a fantasy of getting the band back together, even if the consequences are terrible.

Like a lot of you, I imagine, I have been looking at this shifting world and finding the changes pretty rough to behold. Recession, authoritarianism, nuclear posturing, a weirding climate—these pop up unbidden in the feed, like the time Apple put U2’s Songs of Innocence on everyone’s iTunes without asking. When future historians write books about this era, I feel pretty sure they’ll pick titles like The Fracture, The Fraying Knot, Hope Undone, Leviathan Triumphant, A Web Unwoven, stuff like that. (If they’re Q-storians, they might go with The Gathering Storm.) Obviously they’ll include the last tweet, whatever it is. How else are they supposed to demarcate the end of the glorious web content revolution?

Personally, I’d begin and end that history with the House of Windsor. When Princess Diana died in 1997, the web was just coming into its own. Cable TV dominated, but online news—the linking between articles, the packaging of stories on homepages, the rich dithered GIFs—suddenly began to feel real and relevant. The tragedy was urgent and shocking and unscripted, and for an early web enthusiast it felt like the big leagues. But when Diana’s former mother-in-law died, a quarter of a century later, the part the internet played felt predictable. We knew to expect the tweets against colonialism and against anti-colonialism. We understood implicitly that the funeral horses would be memed. We had a vocabulary for the takes, hot takes, cancellations, and dunks. We posted through it.

RIP the revolutionary internet, 1997–2022. I’m grieving a little over here. But life must go on, despite who wins the US midterm elections, who owns Twitter, and how ridiculous the metaverse might be. That’s why every morning, sometimes before breakfast, when I am in despair, I remember the three letters that always bring me comfort: PDF. And then, when I can, I go digging. I read about Gato, a new artificially intelligent agent that can caption images and play games, or the mathematics underlying misinformation, or “digital twins,” which are simulations of real-world things like cities that consulting firms seem able to sell these days. One site, scholar.archive.org, has PDFs going back to the 18th century. It’s empowering to look for this stuff instead of waiting for it to be socially discovered and jammed into my brain.

This was the original function of the web—to transmit learned texts to those seeking them. Humans have been transmitting for millennia, of course, which is how historians are able to quote Pliny’s last tweet (“Something up w/ Vesuvius, brb”). But the seeking is important, too; people should explore, not simply feed. Whatever will move society forward is not hidden inside the deflating giants. It’s out there in some pitiful PDF, with a title like “A New Platform for Communication” or “Machine Learning Applications for Community Organization.” The tech industry said we had it all figured out, but we ended up with a billionaire telling us to strap on a helmet (space or VR) while the rising seas lap our toes. So now we have to try again. Now we get to try again.

Paul Ford (@ftrain) is a programmer, award-winning essayist, and cofounder of Postlight, a digital product studio.

This article appears in the December 2022/January 2023 issue.

Image and article originally from www.wired.com.